How to Track Whether AI Tools Are Recommending Your Business

Track AI recommendations across ChatGPT, Perplexity, Claude, Gemini and more. Learn systematic testing, monitoring cadence, and competitive benchmarking.

Summary: Unlike Google search, where you can check your ranking position in seconds, tracking AI recommendations requires systematic testing across multiple platforms. Most B2B companies have no visibility into whether ChatGPT or Perplexity is recommending them. This guide provides a repeatable framework for monitoring your presence in AI-generated recommendations, benchmarking against competitors, and measuring the business impact.

Why Monitoring AI Recommendations Matters

For decades, search visibility has been quantifiable: you rank position one, two, or not at all. Google Search Console tells you exactly where you rank for thousands of queries. This measurability created a feedback loop: companies could track visibility, identify gaps, and improve.

AI recommendations break this feedback loop. If ChatGPT never mentions your company when a prospect asks "What demand generation platforms should I consider?" you have no immediate way to know. Unlike a Google ranking, there's no dashboard, no rank tracker, no clear signal.

This creates a critical business problem: you can't optimise what you can't measure. Without visibility into whether AI is recommending you, you can't:

- Determine if AI visibility is a real problem or a theoretical concern

- Understand which categories and use cases you're missing from

- Benchmark against competitors to understand market positioning

- Measure the impact of content changes on AI recommendations

- Allocate resources between AI optimisation and other priorities

Why This Matters for B2B

For B2B businesses, the stakes are higher than for B2C. A B2B buyer doing research before initiating a sales conversation might ask ChatGPT "What are the leading demand generation platforms?" before visiting Google. If your company is included in that response, you've captured the prospect at a critical moment. If you're not, you might never enter the consideration set.

Prospect research is increasingly happening through AI conversation rather than through Google search. Monitoring this channel is as important as traditional search visibility.

The Challenge of AI Visibility

Unlike Google ranking, AI recommendations face fundamental measurement challenges:

Challenge 1: Non-Deterministic Results

The same prompt to ChatGPT can generate different responses depending on:

- When you run the test (models are updated regularly)

- Your ChatGPT settings and conversation history

- Minor variations in prompt wording

- The model version you're using

This means you can't achieve perfectly consistent results. A query whose responses include you 80% of the time isn't "ranking at position 1"; the platform is recommending you inconsistently.
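One way to work with this variability is to run the same prompt several times and report a range rather than a single figure. The sketch below is illustrative only: it assumes each run is an independent yes/no observation and uses a Wilson score interval. The function name and the 8-of-10 example are hypothetical.

```python
import math


def wilson_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a mention rate."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = mentions / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half_width = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical example: mentioned in 8 of 10 repeated runs of one prompt.
low, high = wilson_interval(mentions=8, runs=10)
print(f"Observed rate 80%, plausible range {low:.0%} to {high:.0%}")
```

With only ten runs the range is wide, which is a reason to treat small week-over-week movements with caution.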

Challenge 2: No Public API for Testing

Unlike Google Search Console, there's no official API to query "how many times did ChatGPT recommend me this month?" You have to test manually or use third-party tools that use web scraping or API proxying.

Challenge 3: Multiple Competing Platforms

You need to test across 4-6 major platforms (ChatGPT, Perplexity, Claude, Gemini, Copilot, and possibly others). Each has different recommendation patterns and use cases.

Challenge 4: Defining Success

With Google ranking, position one is clearly better than position three. With AI recommendations, is it better to be mentioned early in a response? Named specifically? Referenced in a source list? The value of different recommendation placements isn't standardised.

Challenge 5: Privacy and Testing Infrastructure

Large-scale testing across multiple platforms requires accounting for:

- Rate limits (each platform allows a certain number of queries)

- Authentication (some platforms require accounts)

- Privacy (you may not want to share your testing queries with the platforms)

- Cost (ChatGPT Plus, Perplexity Pro, Claude Pro all have subscription costs for high-volume testing)

Understanding these challenges is the first step to building a realistic, sustainable monitoring approach.

Systematic Prompt Testing Methodology

The most direct way to track AI recommendations is systematic testing: run a set of your target queries through AI platforms, document what gets recommended, and track results over time.

Step 1: Define Your Query Set

Build a list of 50-200 queries that represent how prospects research your space. For a demand generation agency, this might include:

Category queries:

- "What is demand generation?"

- "Demand generation vs lead generation"

- "Demand generation tools"

Platform comparison queries:

- "Best demand generation software"

- "Demand generation platforms comparison"

- "[Competitor name] vs [your company]"

Use case queries:

- "Demand generation for B2B SaaS"

- "Demand generation for enterprise software"

- "How to implement demand generation"

Problem-solution queries:

- "How do I generate leads through content?"

- "How do I build a demand generation team?"

- "What skills does a demand generation manager need?"

Industry-specific queries:

- "Demand generation in healthcare tech"

- "Demand generation for fintech companies"

Start with 50 queries in your core category. You'll expand later. Quality matters more than quantity: focus on queries that actually influence buying decisions.
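If you keep the query set in code rather than a document, the bracketed placeholders can be expanded automatically. This is a minimal sketch under assumptions: the template dictionary, the function name, and the company and competitor names are all hypothetical.

```python
from itertools import product

# Templates grouped by category; placeholders mirror the bracketed ones above.
QUERY_TEMPLATES = {
    "category": ["What is demand generation?", "Demand generation tools"],
    "comparison": ["Best demand generation software", "{competitor} vs {company}"],
    "use_case": ["Demand generation for {segment}"],
}


def build_query_set(company: str, competitors: list[str], segments: list[str]) -> list[dict]:
    """Expand every template into concrete queries tagged with their category."""
    queries = []
    for category, templates in QUERY_TEMPLATES.items():
        for template in templates:
            # Expand over competitors or segments only if the template uses them.
            comp_values = competitors if "{competitor}" in template else [""]
            seg_values = segments if "{segment}" in template else [""]
            for competitor, segment in product(comp_values, seg_values):
                text = template.format(
                    company=company, competitor=competitor, segment=segment
                )
                queries.append({"category": category, "query": text})
    return queries


for item in build_query_set("Acme", ["Rival A", "Rival B"], ["B2B SaaS"]):
    print(item["category"], "|", item["query"])
```

Tagging each query with its category makes it easier to report gaps by query type later.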

Step 2: Design Your Testing Template

Create a simple spreadsheet with columns for:

- Query

- Platform (ChatGPT, Perplexity, Claude, etc.)

- Date tested

- Model version

- Your company mentioned? (Yes/No)

- Position in response (first mention, middle, late, sources list)

- Full response text (optional but valuable)

- Competitors mentioned

- Number of sources cited
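The same template can be kept as a typed record that writes to CSV, so it still opens in any spreadsheet. This is a sketch, not a required format: the class name, field names, and sample values are assumptions chosen to match the columns above.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class QueryResult:
    query: str
    platform: str
    date_tested: str            # ISO date, e.g. "2024-01-08"
    model_version: str
    mentioned: bool
    position: str               # "first", "middle", "late", "sources" or ""
    competitors_mentioned: str  # semicolon-separated names
    sources_cited: int
    response_text: str = ""     # optional but valuable


def write_results(results: list[QueryResult], handle) -> None:
    """Write results as CSV with one column per template field."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(QueryResult)])
    writer.writeheader()
    for result in results:
        writer.writerow(asdict(result))


# Hypothetical sample row written to an in-memory buffer.
buffer = io.StringIO()
write_results(
    [QueryResult("Best demand generation software", "Perplexity", "2024-01-08",
                 "unknown", True, "middle", "Rival A;Rival B", 6)],
    buffer,
)
print(buffer.getvalue())
```

To write to disk instead, pass a file opened with `open(path, "w", newline="")` as the handle.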

Step 3: Run Your Baseline Test

Test all 50 queries across all major platforms. This takes 2-3 hours depending on how many platforms you include. Use a consistent approach:

- Open a fresh browser session (clear cookies, history)

- Run the query exactly as written

- Wait for the full response (important for platforms that stream responses)

- Document whether you're mentioned

- Note the order (first, second, third, sources list, etc.)

- Copy the full response for later analysis

For platforms that offer several model versions (GPT-3.5 vs GPT-4 in ChatGPT, for example), test each version your audience is likely to use.
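Once you have saved the full response text, the "mentioned?" and "position" columns can be filled in programmatically. The sketch below is a rough heuristic under assumptions: it matches the brand name as a whole phrase, ignoring case, and labels the position by where the first match falls in the text (first third, middle third, last third). The function name and brand names are hypothetical, and the heuristic will not recognise misspellings or product nicknames.

```python
import re


def find_mention(response: str, brand: str) -> dict:
    """Report whether brand appears in response and roughly where."""
    # Lookarounds stop "Acme" from matching inside a longer word such as "Acmeology".
    pattern = rf"(?<!\w){re.escape(brand)}(?!\w)"
    match = re.search(pattern, response, flags=re.IGNORECASE)
    if match is None:
        return {"mentioned": False, "position": ""}
    relative = match.start() / max(len(response), 1)
    if relative < 1 / 3:
        position = "early"
    elif relative < 2 / 3:
        position = "middle"
    else:
        position = "late"
    return {"mentioned": True, "position": position}


print(find_mention("Acme is a strong choice. Others include Foo and Bar.", "acme"))
print(find_mention("Foo and Bar lead the market.", "Acme"))
```

A manual spot check of a sample of responses is still worthwhile, since a mention can be negative or incidental.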

Step 4: Establish Your Baseline

After your first comprehensive test, you have a baseline. For each query, you know:

- Does this AI platform mention you? (If not, this is a gap.)

- How prominently? (If you're in the sources list but not in the main response, this signals lower weighting.)

- What else does it recommend? (Competitive intelligence.)

Step 5: Establish Your Testing Cadence

Consistency matters. Set a regular testing schedule:

- Weekly: Test 5-10 priority queries (your highest-intent, highest-priority queries)

- Monthly: Test your full set of 50 queries

- Quarterly: Add 20-50 new queries, expand into adjacent categories

A weekly cadence for priority queries lets you see the impact of content changes quickly. A monthly cadence for your full set tracks overall trends.
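The weekly and monthly cadence can be expressed as a small scheduling rule. This sketch assumes testing happens on Mondays, with the full set run on the first Monday of the month and only priority queries on other Mondays. The function name, the `priority` flag, and the sample queries are hypothetical.

```python
from datetime import date


def queries_due(today: date, queries: list[dict]) -> list[str]:
    """Return the queries to test today under a Monday-based cadence."""
    if today.weekday() != 0:  # Monday is 0
        return []
    if today.day <= 7:  # first Monday of the month: run the full set
        return [q["query"] for q in queries]
    return [q["query"] for q in queries if q["priority"]]


query_set = [
    {"query": "Best demand generation software", "priority": True},
    {"query": "What is demand generation?", "priority": False},
]
print(queries_due(date(2024, 1, 1), query_set))  # first Monday of the month
print(queries_due(date(2024, 1, 8), query_set))  # a later Monday
```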

Step 6: Automate Where Possible

For testing at scale, use tools:

- Semrush Sensor (Google only, but includes AI Overview tracking)

- Moz Keyword Research (includes AI Overviews)

- SE Ranking (tracks AI Overviews and some LLM mentions)

- Third-party LLM monitoring (emerging tools such as Apptio and brand-specific tools)

The limitation is that most tools focus on Google's AI Overviews. For ChatGPT, Perplexity, and Claude tracking, manual testing remains the most reliable approach (though this is changing rapidly).

Monitoring Across AI Platforms

Different AI platforms have different recommendation patterns and different importance for your business. Understand each one.

ChatGPT (OpenAI)

- Market importance: Highest. ChatGPT is widely used among B2B professionals

- Testing approach: ChatGPT Plus ($20/month) offers much higher usage limits than the free tier, which is rate-limited

- Response structure: Generates 3-5 paragraph answer, often mentions 3-5 resources

- Recommendation style: Lists specific companies/platforms or describes general categories

- Key insight: ChatGPT is less consistent than other platforms; responses vary based on your conversation history

Perplexity

- Market importance: High and growing. Specifically built for research queries

- Testing approach: Free version allows limited searches. Pro ($20/month) allows 600 queries/day

- Response structure: Answers with sources cited throughout; very transparent about attribution

- Recommendation style: Tends to mention 4-8 sources explicitly; heavy use of citations

- Key insight: Perplexity is more transparent about sources; if you're mentioned, you're explicitly cited

Claude (Anthropic)

- Market importance: Growing. Strong adoption in technical and research communities

- Testing approach: Claude.ai free tier allows some usage; Claude Pro ($20/month) offers higher usage limits

- Response structure: Long-form, nuanced responses; often discusses trade-offs and complexity

- Recommendation style: Less likely to mention specific vendors; more likely to describe categories

- Key insight: Claude emphasises neutrality; heavily vendor-specific lists are less common

Google Gemini

- Market importance: High. Integrated into Google Search (as AI Overviews)

- Testing approach: Free at gemini.google.com; no rate limits

- Response structure: Similar to ChatGPT but often includes links to Google results

- Recommendation style: Sometimes recommends Google Search results directly

- Key insight: Gemini is increasingly integrated into Google products; important for search visibility

Microsoft Copilot

- Market importance: Growing. Integrated into Windows, Office, and Bing

- Testing approach: Free at copilot.microsoft.com; built on GPT-4

- Response structure: Similar to ChatGPT; often pulls from Bing search results

- Recommendation style: Balanced between LLM generation and search results

- Key insight: Important for enterprise customers with heavy Microsoft adoption

Monitoring Strategy by Platform

For most B2B companies:

- Priority 1: ChatGPT and Perplexity (highest usage among B2B researchers)

- Priority 2: Google Gemini and Microsoft Copilot (broad reach, integration with enterprise tools)

- Priority 3: Claude (growing influence in technical segments)

If you operate in specific verticals, add relevant platforms:

- Legal tech: Westlaw's AI-powered tools

- Healthcare: Medical research platforms integrating LLMs

- Financial services: Bloomberg and Reuters AI services

Building Your Monitoring Framework

Create a repeatable, sustainable process for tracking AI recommendations:

Phase 1: Infrastructure (Week 1)

- Create a spreadsheet template (or use a database tool like Airtable)

- Build your initial query set (start with 50 queries)

- Set up subscriptions to necessary platforms (ChatGPT Plus, Perplexity Pro, etc.)

- Create a shared folder for storing response text and analysis

Phase 2: Baseline Testing (Week 2-3)

- Test all 50 queries across all platforms

- Document each test comprehensively

- Create a summary: "For our top 50 queries, we're mentioned in X% of ChatGPT responses"

- Identify gaps: "We're missing from 80% of platform comparison queries"
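The baseline summary and gap list can be computed directly from the tracking data. The sketch below assumes each record carries a `category` label and a `mentioned` flag; the function names, the 25% gap threshold, and the sample records are hypothetical.

```python
from collections import defaultdict


def mention_rate_by_category(records: list[dict]) -> dict[str, float]:
    """Share of tested queries in each category that mentioned the company."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for record in records:
        totals[record["category"]] += 1
        hits[record["category"]] += int(record["mentioned"])
    return {category: hits[category] / totals[category] for category in totals}


def find_gaps(rates: dict[str, float], threshold: float = 0.25) -> list[str]:
    """Categories whose mention rate falls below the chosen threshold."""
    return sorted(category for category, rate in rates.items() if rate < threshold)


# Hypothetical baseline: weak in comparison queries, stronger in use-case queries.
baseline = (
    [{"category": "comparison", "mentioned": m} for m in (False, False, False, False, True)]
    + [{"category": "use_case", "mentioned": m} for m in (True, False)]
)
rates = mention_rate_by_category(baseline)
for category, rate in rates.items():
    print(f"{category}: mentioned in {rate:.0%} of queries")
print("Gaps:", find_gaps(rates))
```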

Phase 3: Weekly Monitoring (Ongoing)

- Each week, test 5-10 priority queries

- Update your tracking sheet

- Look for week-over-week changes

- Log any model updates or platform changes you notice

Phase 4: Monthly Analysis (Monthly)

- Test your full 50-query set

- Aggregate results: "Week 1: 60% mention rate. Week 2: 62%. Month overall: 61%."

- Identify trends: "Our mention rate is increasing by 2 percentage points per month"

- Competitive comparison: "Competitor A is mentioned in 85% of platform queries. We're at 45%."
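The monthly aggregation and trend figures can be derived from dated records. This sketch assumes ISO-formatted `date_tested` strings and reports month-over-month change in percentage points; the function names and sample data are hypothetical.

```python
from collections import defaultdict


def monthly_mention_rates(records: list[dict]) -> dict[str, float]:
    """Map each 'YYYY-MM' month to the share of tests that mentioned the company."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for record in records:
        month = record["date_tested"][:7]
        totals[month] += 1
        hits[month] += int(record["mentioned"])
    return {month: hits[month] / totals[month] for month in sorted(totals)}


def month_over_month_points(rates: dict[str, float]) -> dict[str, float]:
    """Change versus the previous month, in percentage points."""
    months = list(rates)
    return {
        current: round((rates[current] - rates[previous]) * 100, 1)
        for previous, current in zip(months, months[1:])
    }


# Hypothetical data: 3 of 5 tests mention the company in January, 4 of 5 in February.
records = (
    [{"date_tested": "2024-01-08", "mentioned": m} for m in (True, True, True, False, False)]
    + [{"date_tested": "2024-02-05", "mentioned": m} for m in (True, True, True, True, False)]
)
rates = monthly_mention_rates(records)
print(rates)
print(month_over_month_points(rates))
```

Running the same functions on a competitor's records gives the side-by-side comparison described in the last bullet.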

Phase 5: Quarterly Review (Quarterly)

- Expand your query set by 20-50 new queries

- Assess your content changes' impact

- Benchmark against competitors

- Report findings to leadership

Competitive Benchmarking

Tracking your own performance is one half. Benchmarking against competitors is the other half.

Step 1: Identify Your Competitive Set

List your 3-5 main competitors. For each one, add queries like:

- "[Company name] alternatives"

- "[Company name] vs [your company]"

- "[Company name] review"

- "[Competitor 1] vs [Competitor 2]"

Step 2: Test Competitive Inclusion

For your 50 core queries, document:

- Who gets mentioned alongside you?

- How frequently is each competitor mentioned?

- In what position (early, middle, late)?

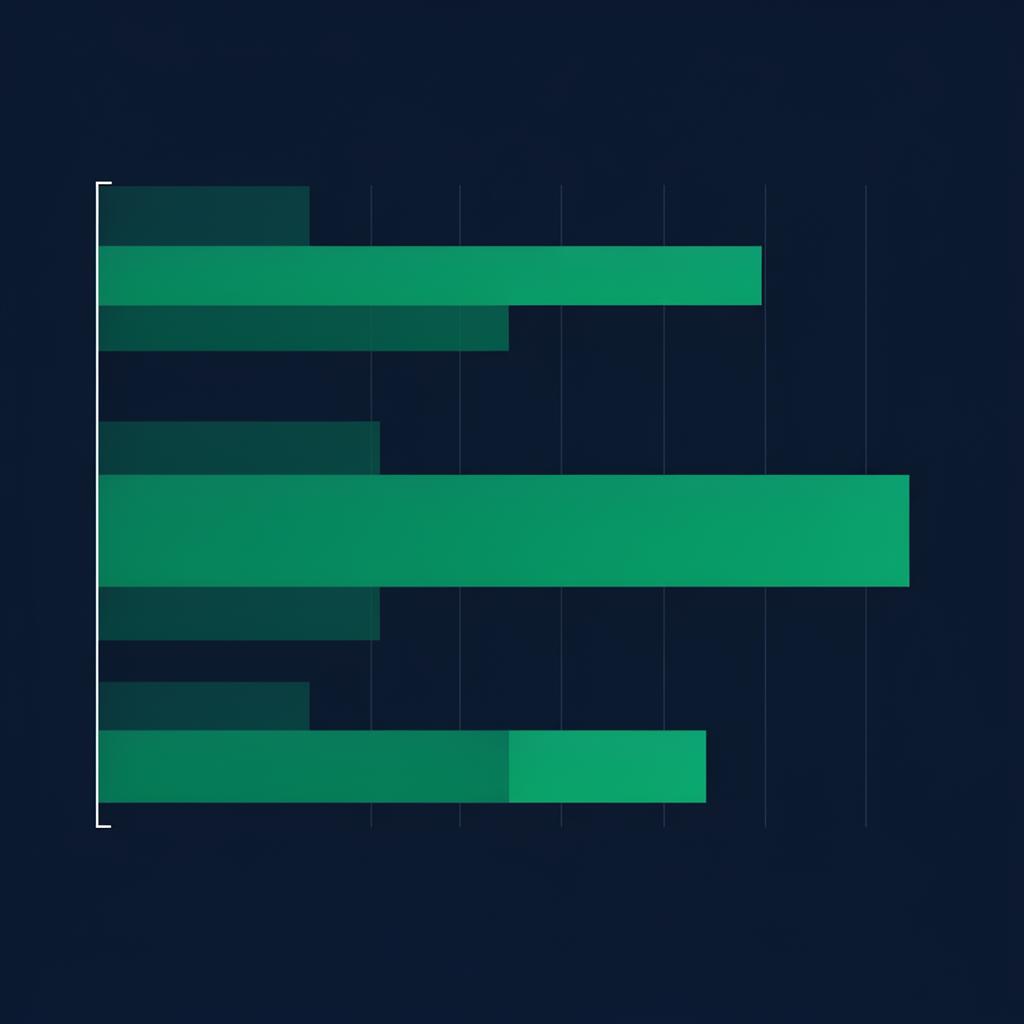

Step 3: Create a Competitive Matrix

Build a simple table:

| Query | Your Company | Competitor A | Competitor B | Competitor C |
|---|---|---|---|---|
| Best demand gen tools | Mentioned (pos 2) | Mentioned (pos 1) | Not mentioned | Mentioned (sources) |
| Demand gen platforms | Mentioned (pos 1) | Not mentioned | Mentioned (pos 2) | Mentioned (pos 3) |
| DG software comparison | Mentioned (sources) | Mentioned (pos 2) | Mentioned (pos 1) | Not mentioned |

This matrix reveals:

- Which competitors dominate which query types

- Where you're weak relative to competitors

- Opportunities (queries where no one's dominating)

- Market positioning (are you in "premium" mentions or "also-ran" mentions?)
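A matrix like the one above can be generated from logged observations instead of being maintained by hand. This is a sketch under assumptions: observations are recorded as `(query, company, position)` tuples for each mention, and the function names are hypothetical. The sample data reproduces the example table.

```python
def build_matrix(queries, companies, observations):
    """Return {query: {company: status}}, defaulting to 'Not mentioned'."""
    matrix = {q: {c: "Not mentioned" for c in companies} for q in queries}
    for query, company, position in observations:
        matrix[query][company] = f"Mentioned ({position})"
    return matrix


def mention_counts(matrix):
    """Count the queries in which each company is mentioned at all."""
    counts = {}
    for row in matrix.values():
        for company, status in row.items():
            counts[company] = counts.get(company, 0) + int(status != "Not mentioned")
    return counts


companies = ["Your Company", "Competitor A", "Competitor B", "Competitor C"]
queries = ["Best demand gen tools", "Demand gen platforms", "DG software comparison"]
observations = [
    ("Best demand gen tools", "Your Company", "pos 2"),
    ("Best demand gen tools", "Competitor A", "pos 1"),
    ("Best demand gen tools", "Competitor C", "sources"),
    ("Demand gen platforms", "Your Company", "pos 1"),
    ("Demand gen platforms", "Competitor B", "pos 2"),
    ("Demand gen platforms", "Competitor C", "pos 3"),
    ("DG software comparison", "Your Company", "sources"),
    ("DG software comparison", "Competitor A", "pos 2"),
    ("DG software comparison", "Competitor B", "pos 1"),
]
matrix = build_matrix(queries, companies, observations)
print(mention_counts(matrix))
```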

Step 4: Analyse Competitive Patterns

Look for patterns:

- Do certain competitors always get mentioned first? (They likely have stronger brand authority)

- Are there query categories where you do well but others don't? (Your content niche)

- Which competitors are you most frequently compared against in "vs" responses? (Your true competitive set)

Interpreting Your Results

Raw mention counts matter, but context matters more.

Interpretation: Mention Rate vs. Recommendation Quality

Two different scenarios:

Scenario A: You're mentioned in 70% of queries, but usually as "also consider" in the sources list.

Scenario B: You're mentioned in 30% of queries, but always as the primary recommendation with specific use case fit.

Scenario B is likely better. Fewer, higher-quality mentions drive more pipeline than many low-quality mentions.

Metrics to Track

Beyond simple mention rate, track:

- Mention Rate: % of queries where you're mentioned

- Position Score: Are you in the first mention (high value) or sources list (lower value)?

- Competitive Win Rate: % of "vs competitor" queries where you're chosen

- Feature Mentions: Are specific, differentiating features mentioned about your company?

- Intent Alignment: For high-intent queries, what's your mention rate? (Higher-intent queries matter more)
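To make the position score concrete, each placement can be given a weight and averaged across queries. The weights below are illustrative assumptions, not an industry standard, and the function name is hypothetical. The example applies them to Scenario A and Scenario B from above.

```python
# Assumed weights: a primary recommendation counts five times a sources-list entry.
POSITION_WEIGHTS = {"first": 1.0, "middle": 0.6, "late": 0.4, "sources": 0.2}


def position_score(positions: list) -> float:
    """Average weight per query; None (not mentioned) or unknown labels score 0."""
    if not positions:
        return 0.0
    return sum(POSITION_WEIGHTS.get(p, 0.0) for p in positions) / len(positions)


# Scenario A: mentioned in 7 of 10 queries, always in the sources list.
scenario_a = ["sources"] * 7 + [None] * 3
# Scenario B: mentioned in 3 of 10 queries, always as the first recommendation.
scenario_b = ["first"] * 3 + [None] * 7

print(f"Scenario A: {position_score(scenario_a):.2f}")
print(f"Scenario B: {position_score(scenario_b):.2f}")
```

Under these weights Scenario B scores higher despite the lower mention rate. Different weights could reverse that ordering, so choose them deliberately and keep them fixed over time.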

Diagnostic Questions

When you see results, ask:

- Why are we missing from certain query types? (Lack of content? Content isn't discoverable? Content quality issue?)

- When we're mentioned, how are we described? (Accurately? Generic description? Niche perception?)

- How does our mention pattern differ from competitors? (They dominate in certain categories; we dominate in others?)

- Has our mention rate changed over time? (Are our recent content changes helping?)

Common Patterns and What They Mean

Pattern 1: "We're mentioned in comparison queries but not in category definition queries" → Problem: Low brand awareness. Solution: Create category-defining content that establishes what you do.

Pattern 2: "We're mentioned only in our niche (e.g., 'demand gen for mid-market SaaS') but not in general demand gen queries" → Problem: Low general category authority. Solution: Create general-purpose content that addresses the broader category.

Pattern 3: "We're mentioned but always as an also-ran, never as primary recommendation" → Problem: Weak differentiation or weak E-E-A-T (experience, expertise, authoritativeness, trustworthiness) signals. Solution: Strengthen authority and unique value props.

Pattern 4: "We're mentioned frequently but still not driving pipeline" → Problem: Wrong audience or mention context. Solution: Track whether mentions are reaching your target audience.

Scaling Your Monitoring

As you build a monitoring practice, you'll face questions about scale:

How many queries should I track?

Start with 50. Once that's sustainable (monthly testing of 50 queries takes 3-4 hours), expand to 100-150. Beyond 200 queries, manual testing becomes impractical unless you're automating.

How often should I test?

Weekly for your top 10-20 priority queries (highest intent, most strategic). Monthly for your full query set. Quarterly for expanded query sets.

Should I automate this?

Eventually, yes. Current tool options are limited, but emerging platforms are addressing this gap. If you can spare $500-1,000/month, some vendors offer API access for large-scale LLM testing.

For now, the best automation is:

- Standardised testing templates (makes manual testing faster)

- Batch testing (set aside 4 hours on the first Monday of each month for full testing)

- Spreadsheet automation (use formulas to calculate mention rates, trends, etc.)

How do I handle model updates?

When ChatGPT or other platforms release new model versions, your baseline may shift. Document model versions in your testing. When a new version launches, re-test your baseline to understand the shift. Then resume normal tracking.

What if I compete in multiple categories?

Build separate query sets for each category. A company selling both demand generation software and marketing analytics might have two sets of 50 queries each, tested independently. This lets you understand your position in each market separately.

Ross Williams

Founder, Fortitude Media

Ross Williams is the founder of Fortitude Media, specialising in AI visibility and content strategy for B2B companies.
