How to Compare AI Optimisation Providers

What to look for and avoid. Three-pillar requirement, pricing models, contract structures, reporting standards, red flags.

Why Comparison Matters

The market for "AI optimization" services has exploded. Everyone from traditional digital agencies to startup consultants to SEO shops suddenly claims expertise in AI visibility, AI SEO, and AI-optimized content.

This is confusing for buyers. How do you evaluate whether Provider A is genuinely good or just using trendy language? How do you know if their pricing is reasonable? What differentiates a provider worth $10,000/month from one that should cost $3,000/month?

This guide helps you navigate those questions.

The underlying principle: Most "AI optimization" services are mediocre or ineffective because they're missing critical components. A good provider isn't just better in one dimension. They're complete across three dimensions simultaneously.

The Three-Pillar Requirement

Before evaluating any specific provider, understand what actually works in AI optimization. It requires three simultaneous elements. If a provider is strong in one or two but weak in the third, results will be suboptimal.

Pillar 1: Technical Optimization

Your website and content infrastructure need to be optimized for how AI systems crawl, consume, and evaluate information.

This includes:

Content structure optimization

- Semantic HTML markup that makes intent and meaning clear

- Proper heading hierarchy and content organization

- Schema markup for domain-specific information

- Accessibility standards that help AI systems parse content
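
One of the items above, heading hierarchy, is easy to spot-check yourself before or during a provider's audit. The sketch below uses only the Python standard library; the "exactly one h1" and "no skipped levels" rules are common conventions assumed here, not requirements stated by any AI system, and `heading_issues` is a hypothetical helper name.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collects heading levels (h1-h6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable problems with the heading outline (assumed rules)."""
    audit = HeadingAudit()
    audit.feed(html)
    issues = []
    h1_count = audit.levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    # Flag any jump of more than one level, e.g. h2 straight to h4.
    for prev, cur in zip(audit.levels, audit.levels[1:]):
        if cur > prev + 1:
            issues.append(f"h{prev} is followed by h{cur} (skipped a level)")
    return issues


SAMPLE_PAGE = "<h1>Pricing guide</h1><h2>Retainers</h2><h4>Tier 1</h4>"
print(heading_issues(SAMPLE_PAGE))
```

A provider's technical audit should cover far more than this, but a check of this kind gives you a concrete baseline to compare their findings against.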

Information architecture

- Clear navigation that shows topic relationships

- Internal linking that helps AI systems understand context

- Topic clusters that demonstrate expertise depth

- Content hierarchies that match how AI systems evaluate authority

Performance and indexing

- Fast page load times (impacts both AI crawling and user experience)

- Proper sitemap configuration

- Robots.txt optimization for AI crawlers

- API-based content delivery for systems that consume content programmatically
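
For the robots.txt item, a quick way to see whether a site is unintentionally blocking AI crawlers is to run its rules through Python's standard `urllib.robotparser`. This is a minimal sketch: the user-agent tokens in `AI_CRAWLERS` are assumptions to verify against each vendor's current crawler documentation, and `SAMPLE_ROBOTS` is an invented file used only for illustration.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens; confirm them against each vendor's documentation.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_crawlers(robots_txt: str, url: str, agents=AI_CRAWLERS) -> list[str]:
    """Return the crawlers that the given robots.txt text forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Invented example: GPTBot is blocked site-wide, everyone else only from /admin/.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_crawlers(SAMPLE_ROBOTS, "https://example.com/guides/"))
```

With the sample file, only `GPTBot` is reported as blocked from `/guides/`. Whether blocking a given crawler is a mistake or a deliberate choice is a business decision; the check only surfaces what the file currently says.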

Domain authority signals

- Structured data markup that makes company/author information clear

- Author bios and credentials that establish expertise

- Company information and contact details

- Trust signals (security, privacy, verifiable claims)
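
Structured data for company and author information is usually published as schema.org JSON-LD. The sketch below builds a small `Organization` object with a `founder`; the company, person, and URLs are placeholders, `organization_jsonld` is a hypothetical helper, and a real implementation would include whichever schema.org properties fit your business.

```python
import json


def organization_jsonld(name, url, founder_name, founder_title, profiles):
    """Build a schema.org Organization object with a founder, as a JSON-LD dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(profiles),  # official profiles that identify the same entity
        "founder": {
            "@type": "Person",
            "name": founder_name,
            "jobTitle": founder_title,
        },
    }


# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    founder_name="Jane Doe",
    founder_title="Founder",
    profiles=["https://www.linkedin.com/company/example-co"],
)

# The output is intended for a <script type="application/ld+json"> tag on the page.
print(json.dumps(markup, indent=2))
```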

A provider strong in technical optimization can audit your current state, identify gaps, and remediate them. You should be able to ask them: "What specific changes will you make to our technical implementation, and why?"

Pillar 2: Content Production

Content is the primary way AI systems learn what you know. More content on the right topics means more opportunities to be recommended.

But not all content is equal. Content production requires:

Topic strategy

- Identifying high-opportunity topics that users frequently ask AI systems about

- Mapping topics to your expertise and business value

- Creating topic clusters that demonstrate depth in key areas

- Prioritizing topics by recommendation likelihood and business impact

Content quality

- Original research and insights (not just rephrasing competitors)

- Comprehensive coverage (longer, more thorough content typically performs better in AI recommendations)

- Authority signaling (citations, data, expert opinions)

- Freshness and timeliness (AI systems weight recent content higher)

Publication strategy

- Timing of publication (when topics are being discussed in AI systems)

- Co-publication and syndication (appearing in multiple authoritative contexts)

- Updating and refreshing strategy (keeping content current and relevant)

- Format optimization (blog posts, guides, research, case studies, etc.)

A strong content producer can show you:

- A content calendar with specific topics and publication dates

- Performance data showing which content types and topics perform best in AI recommendations

- Competitive research showing gaps they're filling that competitors aren't

- Update and refresh strategy based on performance data

Pillar 3: Earned Media and PR

The third pillar is often overlooked, but it's critical: PR and earned media mentions.

AI systems weight authoritative sources heavily. When industry publications mention your company, when analysts quote you, and when other experts cite your work, all of it feeds into the training data that AI systems use to recommend you.

PR and earned media also:

Create backlink opportunities

- Press coverage includes links back to your website

- Interview participation creates diverse linking domains

- Award nominations and recognitions drive links

- Speaking engagements and conference presence create linking opportunities

Establish authority signals

- Media mentions signal that others find you credible enough to quote

- Expert positioning in publications builds authority

- Industry recognition and awards signal credibility

- Analyst mentions and briefings establish thought leadership

Drive direct referral traffic

- PR coverage generates click-through traffic

- Interview appearances drive podcast and publication traffic

- Social sharing of earned media creates secondary distribution

A strong PR provider can show you:

- Specific publications they have relationships with

- Track record of securing coverage (case studies or examples)

- Understanding of your category and key journalists/outlets

- Strategy for earned media that creates both AI visibility and business results

Evaluating Pillar Completeness

When you're evaluating a provider, ask directly: "How do you approach each of these three pillars?"

Look for:

- Depth in every pillar: They should go deep in all three, not stay at surface level

- Evidence: They should show examples or case studies of past work

- Strategy: They should have a clear methodology for each pillar, not generic "best practices"

- Integration: They should explain how the three pillars work together, not in isolation

Red flag: A provider that's strong in two pillars but weak or vague in the third. Results will be limited by the weakest component.
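
The "weakest component" idea can be made concrete with a simple scorecard. The sketch below assumes you rate each pillar yourself on a 1 to 5 scale after the sales conversations; the scale, the pillar keys, and the rule that the overall rating equals the lowest pillar score are illustrative assumptions, not an industry standard.

```python
PILLARS = ("technical", "content", "earned_media")


def pillar_summary(scores: dict[str, int]) -> dict:
    """Summarise a provider: the overall rating is capped by the weakest pillar."""
    missing = [p for p in PILLARS if p not in scores]
    if missing:
        raise ValueError(f"missing pillar scores: {missing}")
    weakest = min(PILLARS, key=lambda p: scores[p])
    return {
        "weakest_pillar": weakest,
        "overall": scores[weakest],
        "average": round(sum(scores[p] for p in PILLARS) / len(PILLARS), 1),
    }


# Hypothetical ratings on a 1-5 scale.
provider_a = {"technical": 5, "content": 4, "earned_media": 1}
provider_b = {"technical": 3, "content": 3, "earned_media": 3}

print(pillar_summary(provider_a))
print(pillar_summary(provider_b))
```

In this example Provider A has the higher average (3.3 against 3.0) but the lower overall rating (1 against 3), which is the pattern the red flag describes: two strong pillars do not compensate for a weak third.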

Red Flags in Provider Positioning

Certain claims and positioning are warning signs that a provider doesn't fully understand AI optimization.

Red Flag #1: "Our AI SEO service will improve your Google rankings"

AI optimization and Google SEO are related but different. A provider that conflates them is confused.

Google still primarily ranks on traditional SEO signals: backlinks, user engagement, content relevance. AI systems like ChatGPT don't use Google's ranking algorithm at all. They use training data, retrieval-augmented generation, and system prompts.

A provider should be clear: We optimize for AI systems like ChatGPT, Claude, Perplexity, and others. This may help with Google ranking, but that's not our primary objective.

If they say "our AI SEO will boost Google rankings," they're probably just repackaging traditional SEO and calling it AI optimization.

Red Flag #2: "We use AI to write all your content"

AI-generated content at scale is a commodity, because your competitors have access to the same tools. A provider that relies only on AI-generated content is producing the same content as every competitor using those tools.

Content that stands out in AI recommendations comes from human expertise, original research, unique perspectives, and domain knowledge. AI tools are useful for scaling and distribution, but the core insight needs to be human-generated.

A provider should say: We use AI tools to help create and optimize content, but all content is grounded in human expertise and original research. We can show you the research, interviews, and expertise that went into each piece.

Red Flag #3: "Our pricing is based on guaranteed rankings/leads"

Guarantees in AI optimization are meaningless because AI systems are opaque and change constantly. A provider that promises a "10% improvement in AI citations" or "guaranteed leads from AI" is either lying or making a promise it cannot keep.

Good providers commit to effort and process: "We'll publish X pieces of content per month, secure Y pieces of PR coverage, and make Z technical improvements." They should be accountable to these metrics.

They should not guarantee specific AI recommendation results because no one can control what ChatGPT or Claude recommends.

Red Flag #4: "Cheap AI optimization at half the price"

AI optimization done well requires expertise in content, PR, and technical optimization. This is specialized work. If a provider is significantly cheaper than market rate ($3,000/month vs. $10,000/month), they're either:

- Cutting corners on one of the three pillars

- Using entirely AI-generated content without human expertise

- Not doing real PR work (just "outreach automation")

- Using templated technical optimization with no strategic customization

Price isn't a guarantee of quality, but suspiciously cheap providers are almost always cutting corners.

Red Flag #5: "We'll get you into ChatGPT's training data"

You can't "get into" ChatGPT's training data. Each model version is trained on data collected up to a fixed cutoff date, and you can't retroactively add new content to a model that has already been trained.

What you can do is:

- Optimize your content so that when AI systems retrieve it through retrieval-augmented generation (RAG), they find your best work

- Build authority and backlinks that influence future model training

- Ensure your company appears in reliable reference sources that future models will use

A provider claiming they can "get you into ChatGPT's training data" is either confused or being deceptive.

Red Flag #6: "One-time optimization is all you need"

AI optimization isn't solved once. It requires continuous work. A provider suggesting one-time optimization is selling a one-time project, not AI optimization success.

Healthy providers frame this as: "Month 1-3 is foundation. Month 4-24 is continuous improvement and compounding results."

Evaluating Expertise Depth

The providers worth working with have deep, specific expertise in AI optimization. This manifests in several ways.

Their Content About AI Optimization

Look at how the provider writes about their own expertise. Do they publish guides, case studies, and thought leadership about AI optimization?

This matters because:

- It shows they understand the domain deeply enough to write about it publicly

- It demonstrates that they practice what they preach (they should be optimized for AI recommendations themselves)

- It shows they're staying current with the rapidly evolving landscape

Fortitude Media, for example, publishes regularly on AI visibility, AI strategy, and measurement. This grounds their work in current understanding.

A provider with no public material on AI optimization might be competent, but you have no way to verify it.

Their Understanding of Your Category

During sales conversations, a good provider should ask questions about:

- Your specific category and how buyers research solutions

- Your competitors and how they're positioned in AI recommendations

- Your current AI visibility (they should have tested it)

- Your business model and what "success" actually means for you

A bad provider gives a generic pitch that could apply to any company. A good provider gives a customized pitch that shows category expertise.

Case Studies and Examples

Ask for case studies, and don't accept vague ones along the lines of "we worked with a company and they got 40% more leads." Look for specific examples showing:

- Starting position (AI citation frequency: 5%)

- Specific work done (technical changes, content topics, PR outlets)

- Specific results (AI citation frequency: 20%, new leads per month, measurable business impact)

If a provider can't show specific examples with numbers, be skeptical.

References You Can Call

Good providers should offer multiple references willing to discuss their work. Not just success stories, but also neutral references who can tell you what was good and what could have been better.

Ask references:

- "Did they deliver what they promised?"

- "Were results meaningful to your business?"

- "How responsive were they to changes and adjustments?"

- "Would you hire them again?"

Pricing Models and Contract Structures

Pricing for AI optimization varies widely. Understanding what you're paying for and why is critical.

Monthly Retainer vs. Project-Based

Monthly retainer: $8,000-$25,000/month depending on scope and scale

- Includes continuous content, PR, technical optimization

- Monthly deliverables and reporting

- Full team expertise

- Flexibility to adjust scope month-to-month

Project-based: $50,000-$200,000 for a defined 3-6 month engagement

- Usually includes initial strategy, content production, PR campaign

- Less flexibility to adjust scope

- Ends at a defined point (then you need to hire for ongoing work)

For AI optimization, monthly retainer is almost always better than project-based (see article on amortization for why). But if you're testing a provider or have a limited budget, project-based is a reasonable starting point.
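
Because the two models are quoted in different units, it helps to convert them to a common basis before comparing. The sketch below uses only the ranges given above; pairing the cheapest project with the longest duration (and the most expensive with the shortest) is an assumption made to get the widest plausible range.

```python
def implied_monthly_range(total_range, duration_months_range):
    """Convert a project price range into the widest implied monthly range."""
    low_total, high_total = total_range
    shortest, longest = duration_months_range
    # Assumption: cheapest project over the longest term, priciest over the shortest.
    return (low_total / longest, high_total / shortest)


PROJECT_TOTAL = (50_000, 200_000)   # project-based range from this guide
PROJECT_MONTHS = (3, 6)             # engagement length from this guide
RETAINER_MONTHLY = (8_000, 25_000)  # retainer range from this guide

project_monthly = implied_monthly_range(PROJECT_TOTAL, PROJECT_MONTHS)
retainer_annual = tuple(12 * monthly for monthly in RETAINER_MONTHLY)

print(f"Project, implied monthly: ${project_monthly[0]:,.0f} to ${project_monthly[1]:,.0f}")
print(f"Retainer, 12-month total: ${retainer_annual[0]:,} to ${retainer_annual[1]:,}")
```

On these figures a project works out to roughly $8,300 to $66,700 per month of engagement, at or above the retainer range, while a full year on retainer totals $96,000 to $300,000.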

Pricing Tiers

Good providers usually offer tiered pricing:

Tier 1 (Foundation): $6,000-$10,000/month

- Content production (8-12 pieces per month)

- Basic PR support

- Quarterly reporting

- For small companies or proof-of-concept

Tier 2 (Standard): $12,000-$18,000/month

- Comprehensive content production (15-20 pieces)

- Ongoing PR and earned media

- Monthly reporting and optimization

- Technical audit and improvements

- For most B2B companies

Tier 3 (Aggressive): $20,000-$35,000+/month

- High-volume content production (25+ pieces)

- Dedicated PR and analyst relations

- Weekly reporting and optimization

- Continuous technical enhancement

- For competitive markets or growth-focused companies

Make sure you understand what each tier includes. The cheapest tier isn't necessarily bad, but ensure it includes meaningful work in all three pillars.

Contract Terms

Good practices:

- Month-to-month flexibility: You should be able to cancel with 30-60 days' notice if results aren't materializing

- Clear scope: The contract should specify exactly what you get each month

- Performance benchmarks: There should be metrics you're both accountable to

- Escalation and adjustment: The contract should allow for changing scope and focus as results show what's working

Red flags:

- Long-term contracts (12+ months) with no flexibility

- Vague scope descriptions ("premium marketing and content services")

- No defined performance metrics

- Penalty clauses for cancellation

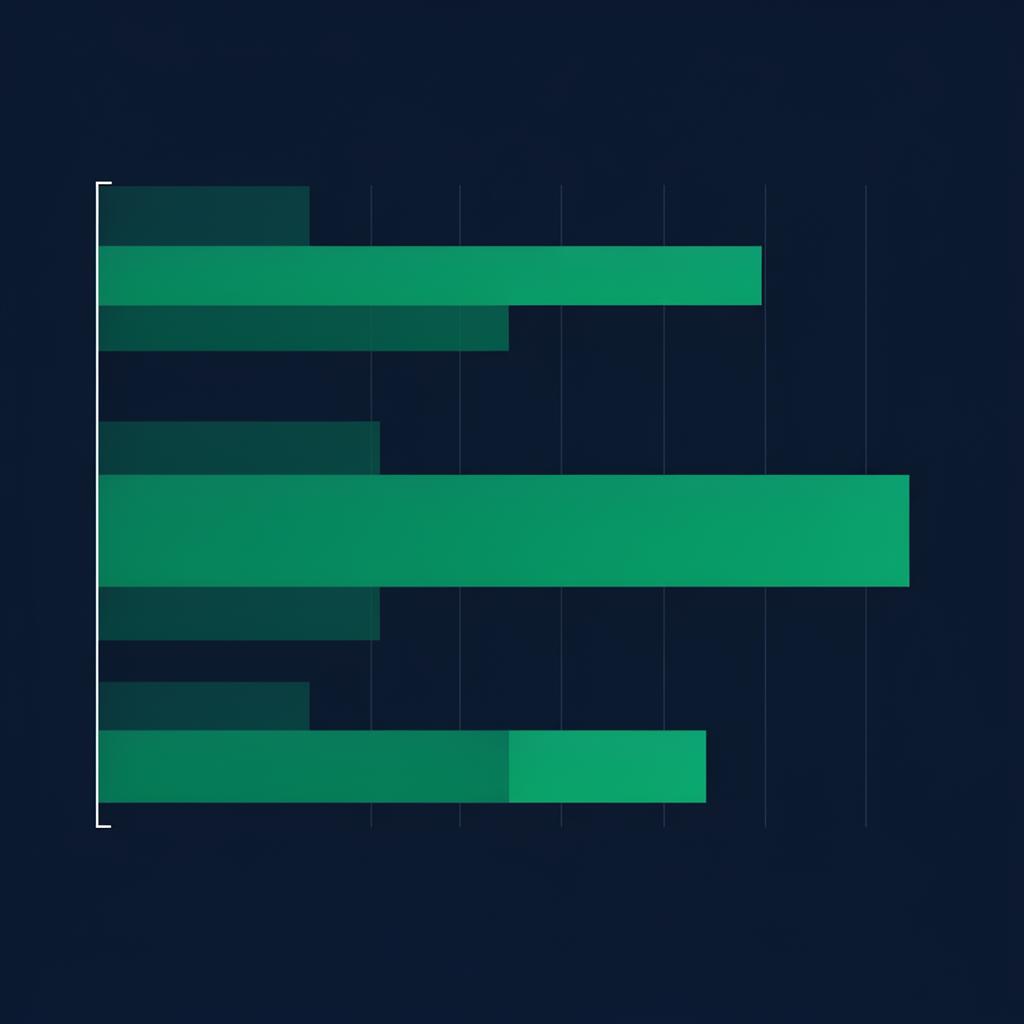

Reporting Standards and Measurement

This is crucial. A provider is only as good as their ability to measure and report on results.

What Good Reporting Looks Like

Monthly report includes:

Content performance:

- Pieces of content published

- Topic focus and reasoning

- Early engagement metrics

- AI citation frequency on new content (if measurable)

PR and earned media:

- Coverage secured (publications, headlines, links)

- Reach and audience size

- Backlinks generated

- Social mentions and sentiment

Technical optimization:

- Changes made and reasoning

- Crawlability improvements

- Core Web Vitals and performance metrics

- Authority signal improvements

Business impact:

- New leads attributed to AI optimization

- Traffic changes (AI-originated vs. search vs. other)

- Competitive positioning shifts

- Actionable recommendations for next month

Quarterly deep-dive includes:

- Competitive positioning analysis (how you stack up against competitors)

- Trend analysis (what's working, what needs adjustment)

- Strategic recommendations for next quarter

- Updated roadmap based on learnings
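
The "AI-originated vs. search vs. other" traffic split in the monthly report can be approximated from referrer data. This is a rough sketch: the hostnames in `AI_REFERRER_HOSTS` and `SEARCH_REFERRER_HOSTS` are assumptions that need maintaining as products change, many AI-driven visits arrive with no referrer at all, and `classify_referrer` is a hypothetical helper.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed hostnames; extend and verify these against your own analytics data.
AI_REFERRER_HOSTS = {"chatgpt.com", "chat.openai.com", "perplexity.ai", "claude.ai"}
SEARCH_REFERRER_HOSTS = {"google.com", "bing.com", "duckduckgo.com"}


def _matches(host: str, known: set[str]) -> bool:
    """True if host equals a known domain or is a subdomain of one."""
    return any(host == k or host.endswith("." + k) for k in known)


def classify_referrer(referrer: str) -> str:
    """Bucket a referrer URL as 'ai', 'search', or 'other'."""
    host = urlparse(referrer).hostname or ""
    if _matches(host, AI_REFERRER_HOSTS):
        return "ai"
    if _matches(host, SEARCH_REFERRER_HOSTS):
        return "search"
    return "other"  # includes visits with an empty or missing referrer


sample_referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=ai+optimisation+providers",
    "https://www.google.com/",
    "https://news.example.com/article",
    "",
]
print(Counter(classify_referrer(r) for r in sample_referrers))
```

A provider's report should state which sources it counts as AI-originated and how it handles visits that carry no referrer, since that choice changes the numbers.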

What Bad Reporting Looks Like

- "Impressions" or "reach" without conversion or business context

- No distinction between content published vs. content performing

- PR coverage that's not tied to business impact

- No competitive benchmarking

- Generic month-to-month reports with no strategic insight

Ask a prospective provider: "Can you show me an example of your monthly reporting?" If it's generic or vague, move on.

Measurement Standards

The provider should be clear about how they measure AI visibility. Standard approaches include:

Testing and monitoring: Running regular queries through ChatGPT, Claude, Perplexity, and other systems to count mentions and recommendations

Search integration monitoring: Tracking how often your content appears in AI-powered search results

Backlink and authority monitoring: Tracking links and mentions that feed into AI training data

Lead attribution: Using UTM parameters, tracking IDs, and customer research to identify AI-originated leads

They should be transparent about which metrics they can reliably measure and which are estimates or proxies.
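
The testing-and-monitoring approach reduces to a simple ratio: of the responses collected for a fixed set of prompts, what share mention you? The sketch below assumes the responses have already been gathered (by hand or by whatever tooling the provider uses) and makes no API calls; the brand names and sample responses are invented, and "citation frequency" is defined here as mentions divided by responses, which a provider may define differently.

```python
import re


def mentions_brand(response: str, brand_terms: list[str]) -> bool:
    """True if any brand term appears as a whole word or phrase, ignoring case."""
    return any(
        re.search(rf"(?<!\w){re.escape(term)}(?!\w)", response, flags=re.IGNORECASE)
        for term in brand_terms
    )


def citation_frequency(responses: list[str], brand_terms: list[str]) -> float:
    """Share of collected responses that mention the brand at least once."""
    if not responses:
        raise ValueError("need at least one response")
    hits = sum(mentions_brand(r, brand_terms) for r in responses)
    return hits / len(responses)


# Invented responses to four test prompts.
sample_responses = [
    "Popular options include Acme Analytics and Example Co.",
    "Most buyers shortlist Acme Analytics or Northwind.",
    "Consider Northwind for smaller teams.",
    "Example Company is unrelated to this category.",
]
print(citation_frequency(sample_responses, ["Example Co"]))
```

Here one of the four responses mentions "Example Co" (the near-miss "Example Company" is not counted), giving 0.25. Responses vary from run to run, so ask a provider how many prompts and repetitions sit behind any citation figure they report.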

Reference Checks and Track Record

Before committing to a provider, talk to people who've worked with them.

What to Ask

"Tell me about your engagement with [Provider]."

- How long did you work with them?

- What was the scope of the project?

- What specific results did you see?

- What surprised you, positively or negatively?

- Would you hire them again? Why or why not?

"Where did you see the biggest impact?"

- Which pillar (content, PR, technical) drove the most results?

- How long did it take to see meaningful results?

- Was the timeline realistic?

"What could have been better?"

- Where did they fall short?

- How did they respond to feedback?

- Were there any disappointments?

"What's the relationship like?"

- How responsive are they?

- Do they understand your business?

- Are they easy to work with?

Red Flags in References

- Vague responses ("they did good work")

- Inability to point to specific results

- Complaints about responsiveness or communication

- Regrets about the engagement or hesitation about recommending

Checking Track Record

Good providers should be able to show:

- Years in the space (not necessarily "since 2020," but enough experience to understand what works)

- Client roster (even if most engagements are confidential, they should be able to name some clients publicly)

- Published case studies with real numbers

- Thought leadership and content they've published

- Industry recognition or awards

The Trial Approach

If you're uncertain which provider to choose, consider starting with a limited trial engagement rather than a full commitment.

Trial Structure

- Duration: 3 months (enough to see initial results)

- Scope: Tier 1 or limited Tier 2 (foundation level)

- Goals: Establish baseline, test methodology, validate approach

- Exit clause: Clear path to expand or end engagement

What You're Testing

- Do they deliver what they promise?

- Is their reporting clear and useful?

- Are they responsive and collaborative?

- Are there early signs of results (even if not full pipeline impact yet)?

Success Criteria for Trial

- They publish content on the committed schedule

- They secure at least some earned media coverage

- They deliver monthly reports on time with actionable insights

- You see measurable changes in AI citation frequency (even if modest)

- They're responsive to feedback and willing to adjust approach

If these criteria are met after 3 months, it's usually worth extending to 12 months for real compounding results.

Ross Williams

Ross Williams is the founder of Fortitude Media, specialising in AI visibility and content strategy for B2B companies.
